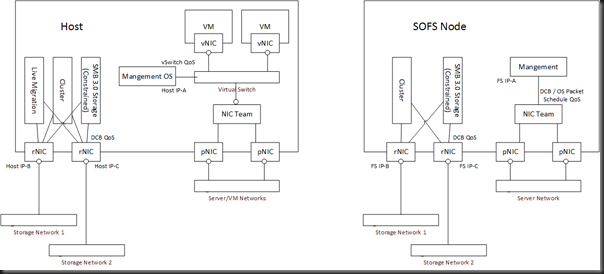

I recently posted a converged networks design for Hyper-V on SMB 3.0 storage with RDMA (SMB Direct). Guess what – it was *consultant speak alert* future proofed. Take a look at the design, particularly the rNICs (NICs that support RDMA) in the host(s):

There you have 2 non-teamed rNICs. They’re not teamed because RDMA is incompatible with teaming. They are using DCB because the OS packet scheduler cannot apply QoS rules to the “invisible” RDMA flow of data. The design accounts for SMB to the SOFS node, Cluster communications, and … Live Migration.

That’s because in Windows Server 2012 R2 (and thus the free Hyper-V Server 2012 R2), we can use SMB Live Migration on 10 GbE or faster rNICs. That gives us:

- SMB Multichannel: The live migration will use both NICs, thus getting a larger share of the bandwidth. SMB Multichannel makes the lack of teaming irrelevant because of the dynamic discovery and fault tolerant nature of it.

- SMB Direct: Live Migration offloads to hardware meaning lower latency and less CPU utilization.

With 3 rNICs in PCIe 3.0 slots, the memory on my host could be the bottleneck in Live Migration speed.

In other words … damn!

What does it all mean? It means VMs with big RAM assignments can be moved in a reasonable time. It means that dense hosts can be vacated quickly.
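To put a rough number on "reasonable time", here is a back-of-the-envelope sketch. It is not a measurement: the function name `migration_seconds`, the `efficiency` factor, and the example VM size are my own assumptions, and it only models a single pass over the VM's RAM (it ignores re-copying of dirtied pages, compression, and any memory or PCIe bottleneck).

```python
def migration_seconds(ram_gib: float, nic_count: int, nic_gbps: float,
                      efficiency: float = 1.0) -> float:
    """Estimate the time to copy a VM's RAM once across several NICs.

    efficiency is a hypothetical fudge factor (0 < efficiency <= 1) for
    protocol overhead; 1.0 means the full line rate is usable.
    """
    if ram_gib < 0 or nic_count <= 0 or nic_gbps <= 0:
        raise ValueError("ram_gib must be >= 0; nic_count and nic_gbps must be > 0")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    bits_to_move = ram_gib * 1024**3 * 8                       # GiB -> bits
    usable_bps = nic_count * nic_gbps * 1e9 * efficiency       # aggregate line rate
    return bits_to_move / usable_bps


if __name__ == "__main__":
    # Hypothetical example: a 64 GiB VM over 2 x 10 GbE rNICs at full line rate.
    print(f"{migration_seconds(64, 2, 10):.1f} s")   # about 27.5 s
    # The same VM over a single 10 GbE NIC takes twice as long.
    print(f"{migration_seconds(64, 1, 10):.1f} s")
```

Even as a crude lower bound, it shows why SMB Multichannel across both rNICs matters: doubling the usable NICs halves the copy time.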

What will adding SMB Live Migration cost you? Maybe nothing more than you were going to spend because this is all done using rNICs that you might already be purchasing for SMB 3.0 storage anyway. And hey, SMB 3.0 storage on Storage Spaces is way cheaper and better performing than block storage.

Oh, and thanks to QoS we get SLA enforcement for bandwidth + dynamic bursting for the other host communications such as SMB 3.0 to the Scale-Out File Server and cluster communications (where redirected IO can leverage SMB 3.0 between hosts too).
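The "guaranteed minimum plus bursting" idea can be illustrated with a small model. This is a sketch of weighted fair sharing in general, not a description of how DCB hardware actually schedules traffic; the function `share_link`, the class names, and the 40/40/20 weights are hypothetical values I picked for the example.

```python
def share_link(link_gbps: float, weights: dict, demand: dict) -> dict:
    """Split a link between traffic classes by weight, letting busy classes
    burst into bandwidth that other classes are not using."""
    if any(w <= 0 for w in weights.values()):
        raise ValueError("every weight must be positive")
    alloc = {name: 0.0 for name in weights}
    remaining = link_gbps
    # Only classes that actually want bandwidth compete for it.
    active = {n for n in weights if demand.get(n, 0.0) > 0}
    while active and remaining > 1e-9:
        w_sum = sum(weights[n] for n in active)
        fair = {n: remaining * weights[n] / w_sum for n in active}
        # Classes whose demand fits inside their fair share are fully served.
        satisfied = {n for n in active if demand[n] <= fair[n]}
        if not satisfied:
            # Everyone left wants more than their share: split by weight.
            for n in active:
                alloc[n] = fair[n]
            break
        for n in satisfied:
            alloc[n] = demand[n]
            remaining -= demand[n]
        active -= satisfied
    return alloc


if __name__ == "__main__":
    weights = {"smb_storage": 40, "live_migration": 40, "cluster": 20}
    # Quiet storage and cluster traffic: Live Migration bursts past its 40%.
    print(share_link(10, weights, {"smb_storage": 2, "live_migration": 10, "cluster": 0.5}))
    # Everything saturated: each class falls back to its guaranteed share.
    print(share_link(10, weights, {"smb_storage": 10, "live_migration": 10, "cluster": 10}))
```

In the first case Live Migration gets the 7.5 Gbps nobody else is using; in the second it is held to 4 Gbps so storage and cluster traffic keep their minimums.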

In other words … damn!

Hey Eric, is having faster vMotions/Live Migrations not worthwhile now?

One thing I can’t figure out is how to force my Hyper-V servers to use the dedicated SMB NICs. The cluster registers both my dedicated SMB NICs and my management NIC(s) in DNS, and when I do a storage migration, my HV server takes the first 2 NICs suitable to reach the SOFS cluster; usually that is my management NIC and one of my SMB NICs. I know I can limit live migrations to particular NICs, but I don’t see where I can set which NICs are used for storage migration. The only way to force the HV servers to use my dedicated SMB NICs is to disable file sharing on the management NICs on the SOFS cluster. Any thoughts on this?

Look at SMB Multichannel constraints if you’re using (or want to use) SMB Multichannel.

Why is the Hyper-V host connected to the Storage networks and not connected to the SOFS cluster?

Seriously?!?!?! Read the above.

Aiden, I used SMBMultichannelConstraints on both the SMBServer (SOFS) and the SMBClient (Hyper-V nodes), and SMB Multichannel does not seem to work on the clustered SOFS. I have patched and hotfixed everything, but no luck. What am I missing?

BTW, 4 x IB connections per host on separate segments.